Extrasensory perception covers experiences like telepathy, clairvoyance, and precognition. This introduction lays out what modern approaches include, from classic Zener card setups to computer-driven trials.

J. B. Rhine at Duke University popularized the term and formalized lab card-guessing methods using Zener cards. Today, many platforms use pseudo-random algorithms to pick targets so probabilities stay stable across trials.

A typical test shows a target, a person makes a guess, and the system records the response. Analysts compare results to what would be expected by chance. Consistent performance across sessions matters far more than a single high run.

This guide covers the main perception types, tools such as Zener cards and software, one- and two-person procedures, and how to read your numbers. It also places reported findings in the context of published research.

Key Takeaways

- Origins trace to J. B. Rhine and Zener card experiments at Duke.

- Modern platforms often use pseudo-random selection to keep trials fair.

- A test compares recorded guesses to chance; consistent runs matter most.

- Extrasensory perception is an umbrella for telepathy, clairvoyance, and precognition.

- The guide will explain methods, setups, and how to interpret results in context.

What ESP testing is and why it matters today

Extrasensory perception is a research term that groups several reported abilities beyond the ordinary senses. It includes mind-to-mind contact (telepathy), impressions about distant targets (clairvoyance), and sensing future events (precognition).

This guide focuses on how tests are run and how to interpret results rather than proving belief. Clear procedures help separate chance from patterns and let curious people learn from their own sessions.

Researchers historically used separated sender and receiver roles and Zener-style targets to reduce cues. Many studies have returned results near random guessing, making consistent replication the key benchmark for any claimed effect.

- Keep roles separate and document each trial carefully.

- Run enough trials to avoid short-term swings.

- Approach sessions as experiments for learning, not as proofs of belief.

For an approachable overview of methods and mind-related abilities, see mind powers techniques.

Tools and targets: Zener cards, symbols, and computer programs

Practical choices—cards, symbols, and programs—define how a session behaves.

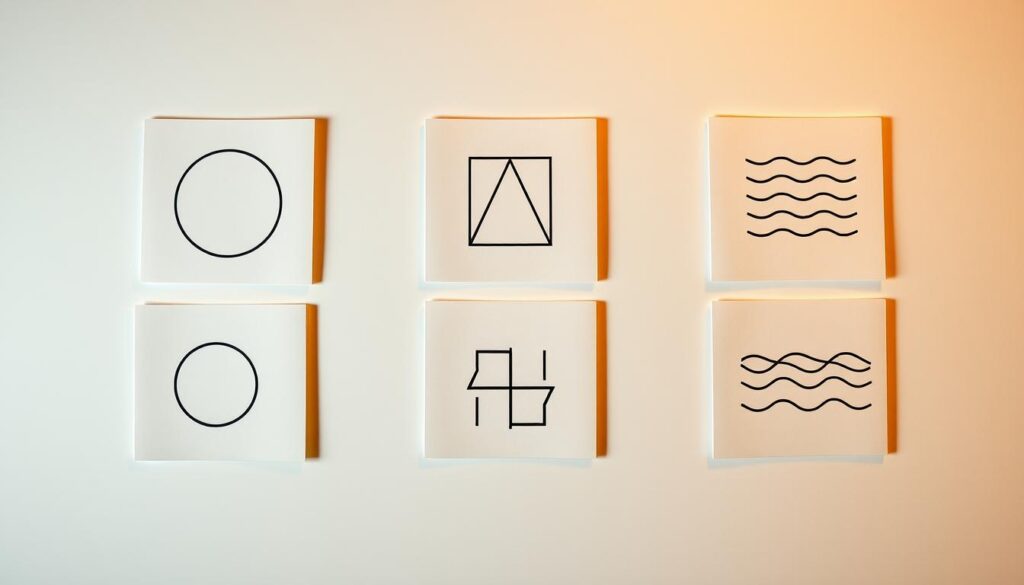

The standard set uses five Zener symbols: circle, cross, wavy lines, square, and star. Each trial picks one symbol from that set so chance levels stay clear.

The five Zener symbols and how randomization works

In modern computer runs, a pseudo-random algorithm selects the card each trial. That keeps sequences unpredictable and fair for long sessions.
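As a rough illustration of per-trial selection, the sketch below uses Python's standard `random` module. The names `ZENER_SYMBOLS` and `pick_target` are illustrative assumptions, not taken from any particular testing platform, and real programs may use a different generator.

```python
import random

# The five standard Zener symbols named above.
ZENER_SYMBOLS = ("circle", "cross", "wavy lines", "square", "star")

def pick_target(rng: random.Random) -> str:
    """Return one symbol; each has probability 1/5 on every call."""
    return rng.choice(ZENER_SYMBOLS)

# A seeded generator makes a run reproducible for later auditing.
# random.SystemRandom() would draw from the operating system's entropy instead.
rng = random.Random(12345)
targets = [pick_target(rng) for _ in range(10)]
print(targets)
```

Seeding is a design choice: it lets a reviewer regenerate the same target sequence, but the seed must stay hidden from the person guessing.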

Open vs. closed decks and Cards Seen vs. Cards Unseen

Open Deck acts like drawing and replacing a card. The probability for any symbol stays constant even if counts vary in your results.

Closed Deck has each symbol appear a fixed number of times. Probabilities shift as the run advances, so analysis gets more complex.
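The difference between the two deck types can be sketched in a few lines. This is a minimal example under assumed names (`open_deck`, `closed_deck`, `remaining_odds`) and an assumed 25-card closed deck with five copies of each symbol; a given program may size its deck differently.

```python
import random
from collections import Counter

ZENER_SYMBOLS = ("circle", "cross", "wavy lines", "square", "star")

def open_deck(n_trials, rng):
    """Draw with replacement: every trial is an independent 1-in-5 pick."""
    return [rng.choice(ZENER_SYMBOLS) for _ in range(n_trials)]

def closed_deck(copies_per_symbol, rng):
    """Shuffle a fixed deck in which each symbol appears exactly copies_per_symbol times."""
    deck = list(ZENER_SYMBOLS) * copies_per_symbol
    rng.shuffle(deck)
    return deck

def remaining_odds(deck, trials_done):
    """Probability of each symbol on the next closed-deck trial, given the cards already used."""
    remaining = Counter(deck[trials_done:])
    total = sum(remaining.values())
    if total == 0:
        raise ValueError("no cards remain in the deck")
    return {symbol: remaining[symbol] / total for symbol in ZENER_SYMBOLS}

rng = random.Random(7)
deck = closed_deck(5, rng)            # assumed 25-card deck
print(Counter(open_deck(25, rng)))    # symbol counts vary from run to run
print(Counter(deck))                  # always five of each symbol
print(remaining_odds(deck, 20))       # late in the run the odds are usually uneven
```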

Cards Seen shows feedback after each guess with running totals. Cards Unseen hides targets and hits until the end, which reduces counting and strategy shifts.

Choosing reliable software and practical safeguards

For clear records, pick a program that documents its randomization method, logs every card and guess, and exports each run in a format you can review later.

Critical safeguards: Closed Deck clairvoyance must use Cards Unseen. In Closed Deck telepathy, the sender must not give trial-by-trial feedback.

| Feature | Open Deck | Closed Deck |
|---|---|---|
| Probability per trial | Constant | Changes during run |
| Feedback option | Cards Seen or Unseen | Prefer Cards Unseen |
| Best for | Simple analysis, quick sessions | Controlled symbol counts, complex stats |
| Required safeguard | None specific | No trial feedback in telepathy; Cards Unseen in clairvoyance |

Start with at least 50 trials and scale toward 100–1000 over time as you look for stable results. Clear procedures and honest logs help make your research useful.

How to run solo clairvoyance sessions

When you run a one-person clairvoyance session, the computer picks each card before you guess, keeping the trial fair.

Step-by-step setup: deck type, randomization, and trials

Choose a platform and set its mode to clairvoyance so the computer selects the target card before your response. Use Open Deck for constant odds or Closed Deck with Cards Unseen to avoid counting effects.

Start with at least 50 trials. If you want more reliable numbers, plan blocks of 100 or more and consider extending toward 500–1000 over time to see stable results beyond chance.

Best practices to avoid bias: timing, environment, and feedback

Remove distractions, silence notifications, and pick a consistent time of day. Sit comfortably, breathe, and record your first impression quickly to reduce second-guessing.

- Do not review interim feedback during the run.

- If using Closed Deck, enable Cards Unseen to prevent indirect counting.

- Export the full run—trial, target, guess, and hits—so you can compare sessions and watch for trends in results.
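If your platform has no export feature, the log described in the last bullet can be approximated by hand. The sketch below assumes a run stored as (target, guess) pairs and a hypothetical helper `export_run`; the column names are illustrative only.

```python
import csv
import io

def export_run(trials, out):
    """Write one row per trial: trial number, target, guess, and a 0/1 hit flag."""
    writer = csv.DictWriter(out, fieldnames=["trial", "target", "guess", "hit"])
    writer.writeheader()
    for number, (target, guess) in enumerate(trials, start=1):
        writer.writerow(
            {"trial": number, "target": target, "guess": guess, "hit": int(target == guess)}
        )

# Made-up example data, not real results.
run = [("star", "star"), ("circle", "square"), ("cross", "cross")]

buffer = io.StringIO()  # swap for open("run.csv", "w", newline="") to write a file
export_run(run, buffer)
print(buffer.getvalue())
```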

| Item | Recommendation |
|---|---|
| Deck type | Open Deck for simple chance comparison; Closed Deck with Cards Unseen for controlled counts |
| Trial count | 50 minimum; scale to 100–1000 for stable data |
| Record keeping | Export logs for later analysis and replication |

How to run a two-person telepathy test with a sender and receiver

A clear two-person protocol helps keep telepathy sessions fair and repeatable. Define roles up front: the sender views each computer-selected card and the receiver attempts to perceive the symbol without any visual or verbal clues.

Roles and workflow

A simple rhythm reduces mistakes. The sender notes the target silently, the receiver gives a single guess, and the sender records it immediately. Use a neutral script so phrasing, timing, or hesitation cannot hint at the answer.

Preventing cues and leakage

Keep the two people physically separate or use a partition and sound control to stop breathing, tone, or gestures from leaking information. For Closed Deck runs, do not provide trial-by-trial feedback; this prevents counting strategies that distort ESP data.

Session length and breaks

Choose moderate blocks—50 to 100 trials split into short runs. Schedule brief timed breaks between blocks to reset attention and reduce fatigue. Log every trial with timestamps, role, and any notes so later review can confirm that good procedures, not accidental cues, drove results.

For practical tips on cultivating consistent practice and skill, see psychic superpowers.

Interpreting ESP test results: chance, hits, and significance

Understanding what counts as a meaningful result starts with the baseline probability. With five Zener symbols, random guessing gives an expected hit rate of 1 in 5, or 20% per trial. Compare your overall results to that baseline across the full run, not just a short segment.
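To make the comparison with the 20% baseline concrete, the sketch below computes the expected number of hits and the exact binomial probability of scoring at least a given number by guessing alone. It assumes independent trials with a constant 1-in-5 chance, which matches an Open Deck; the function names and the example score are hypothetical.

```python
from math import comb

def expected_hits(n_trials, p=0.2):
    """Average number of hits that pure guessing produces over n_trials."""
    return n_trials * p

def p_at_least(hits, n_trials, p=0.2):
    """Exact binomial probability of scoring `hits` or more by pure guessing."""
    return sum(
        comb(n_trials, k) * p**k * (1 - p) ** (n_trials - k)
        for k in range(hits, n_trials + 1)
    )

n, hits = 50, 15  # hypothetical session: 15 hits in 50 trials
print(expected_hits(n))               # chance expectation for 50 trials
print(round(p_at_least(hits, n), 3))  # how often guessing alone does this well
```

A small value from `p_at_least` only says the score would be unusual for one session; it does not replace repeating the run under the same conditions.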

Why 50 trials matters. Small runs can swing widely by luck. Computerized platforms often recommend at least 50 trials to reduce random fluctuation. Scaling to 100–1000 trials narrows uncertainty and makes any pattern more credible.
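The effect of trial count can be sketched with the standard error of a proportion, sqrt(p(1 − p)/n). The example below uses a normal approximation with a two-standard-error band, which is an assumption of this sketch rather than a rule from any platform; `chance_band` is a hypothetical helper.

```python
from math import sqrt

def chance_band(n_trials, p=0.2, z=2.0):
    """Approximate range of hit rates that pure guessing yields about 95% of the time."""
    se = sqrt(p * (1 - p) / n_trials)  # standard error of the hit rate
    return max(0.0, p - z * se), min(1.0, p + z * se)

for n in (25, 50, 100, 1000):
    low, high = chance_band(n)
    print(f"{n:>5} trials: {low:.1%} to {high:.1%}")
```

Under this approximation, 100 trials gives a band of about 12% to 28%, while 1000 trials narrows it to about 17.5% to 22.5%, so a modest deviation from 20% only stands out in longer runs.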

Open vs. Closed deck implications

In an Open Deck, each trial keeps the same probability for every symbol, which simplifies analysis. In a Closed Deck, the odds change as cards are used, so hits may reflect knowledge of which symbols remain rather than ability.
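A short simulation shows why Closed Deck scores need care when feedback is visible. The sketch assumes a 25-card deck with five copies of each symbol and a hypothetical guesser who sees every target after guessing and always names a symbol with the most copies left; no ability of any kind is modeled.

```python
import random
from collections import Counter

ZENER_SYMBOLS = ("circle", "cross", "wavy lines", "square", "star")

def counting_run(rng, copies_per_symbol=5):
    """Hits for a guesser who tracks revealed cards and names the most plentiful remaining symbol."""
    deck = list(ZENER_SYMBOLS) * copies_per_symbol
    rng.shuffle(deck)
    remaining = Counter(deck)
    hits = 0
    for target in deck:
        guess = max(ZENER_SYMBOLS, key=lambda symbol: remaining[symbol])
        hits += guess == target
        remaining[target] -= 1  # feedback reveals the card, so the count is updated
    return hits

rng = random.Random(2024)
runs = [counting_run(rng) for _ in range(2000)]
print(sum(runs) / len(runs))  # mean hits per 25-card run; 5.0 would be pure chance
```

The counting guesser averages well above five hits per run through bookkeeping alone, which is why hiding feedback matters in Closed Deck sessions.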

Reading patterns versus randomness

Streaks of hits or misses happen under chance. Look for consistent performance above the 20% baseline across multiple sessions rather than a single spike. Keep full logs—trial number, guessed symbol, target symbol, hit or miss, and timestamps—so you or others can review the data.
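To see how often streaks appear with no effect at all, the following sketch simulates sessions of pure guessing at a 20% hit rate. The session length, streak threshold, and function name are illustrative assumptions.

```python
import random

def longest_hit_streak(n_trials, rng, p=0.2):
    """Longest run of consecutive hits in one simulated session of pure guessing."""
    longest = current = 0
    for _ in range(n_trials):
        if rng.random() < p:  # a chance hit
            current += 1
            longest = max(longest, current)
        else:
            current = 0
    return longest

rng = random.Random(99)
streaks = [longest_hit_streak(100, rng) for _ in range(5000)]
share = sum(s >= 3 for s in streaks) / len(streaks)
print(f"Sessions with a streak of 3+ hits: {share:.0%}")
```

A substantial share of simulated 100-trial sessions contains at least one run of three hits, so a streak on its own is weak evidence of anything.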

- Start with the baseline: 20% chance per trial with five-symbol decks.

- Use 50 trials minimum; scale to 100–1000 for more stable results.

- Prefer Open Deck for straightforward statistics; treat Closed Deck outcomes with extra care.

- Replicate any apparent deviation under similar conditions before accepting it as meaningful.

For a practical platform to record and review runs, try the psychic abilities test and export your logs for further analysis. Decades of studies have mostly found results near chance, so careful procedure and replication are essential to meaningful research.

What studies and research say about evidence for ESP

Research into extrasensory perception has a long history, from the Society for Psychical Research in the 1880s to modern lab work.

Early formal work in the 1930s by J. B. Rhine used Zener card experiments to put symbol-based claims into a lab setting. Later methods shifted toward free-response tests that ask a person to describe concealed photos or video clips. These designs felt more natural for some participants and moved beyond fixed-card protocols.

What the papers and programs show

Many peer-reviewed studies cluster near chance, and replication issues are common. The U.S. Stargate program ran for roughly two decades, until 1995, before reviewers concluded results did not reliably exceed chance levels.

Brain scans and controversial reports

A 2008 MRI study compared brain activity when participants viewed targets directly with activity when they attempted to perceive hidden targets presented by computer, and it found no meaningful difference. High-profile precognition claims, such as Daryl Bem’s work, drew attention, but independent replication attempts did not reproduce the effect.

- Evolution: card labs → free-response formats.

- Mixed outcomes: many studies are inconclusive, clustering around chance.

- Replicability: independent confirmation is essential before accepting strong claims.

Controlled setups carefully separate sender and receiver, blind targets, and log every trial. When a study shows above-chance hits with cards or free-response material, the next step is independent teams repeating the same procedures. For related methods on mind-matter interaction, see what are PK abilities.

Conclusion

Practical procedure and steady records make it simpler to judge any claimed ability. Keep runs clear: choose deck and feedback settings, set a trial count (50+), and export full logs so each person can compare sessions over time.

Consistency matters more than a single striking event. Whether you run a one-person session or work with a partner, keep roles strict: the sender gives no feedback, and the receiver works without sight of the target or other cues.

Expect random clusters; weigh your results against published evidence and repeat any notable run under the same conditions before drawing conclusions.

If you want to develop skills, schedule regular practice and track day-to-day changes. For guided exercises, see develop your abilities.